Facial Expression Capturing with ARKit and Facial Animation Recreation Using Blender and Unity

A Final Project Report for CPSC 8110: Technical Character Animation

This is a report I wrote for my Technical Character Animation class in the spring of 2019. In this final project, I explored facial expression capture on hand-held devices such as the iPhone. The main difference between the work this paper lays out and current state-of-the-art facial animation solutions such as Apple’s Animoji is that this paper uses the iPhone only as a facial data capture device rather than as the host for facial animation APIs. As a result, the captured data can be used flexibly on any platform for any purpose, while keeping the convenience of running a capture session on a hand-held device.

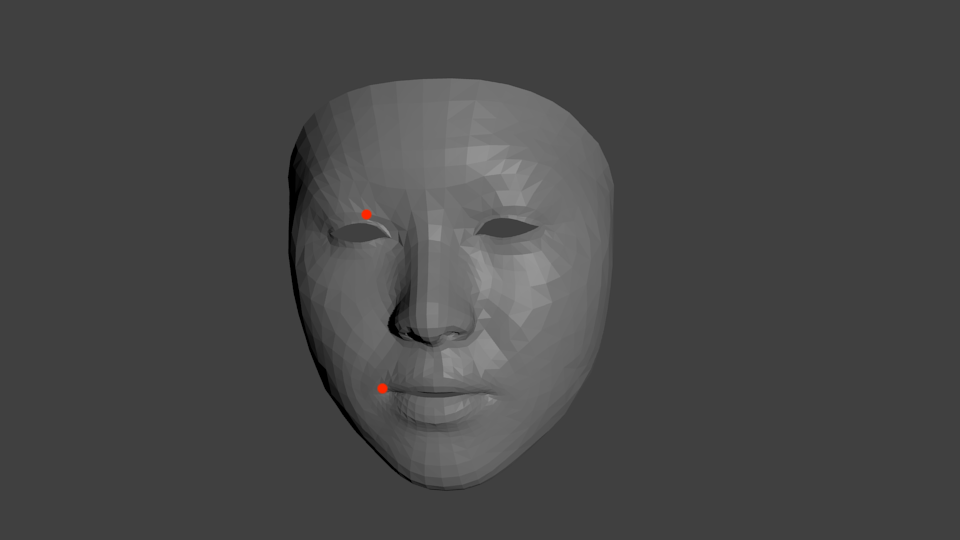

The main goal of this paper is to explain the pipeline the author built, which captures human facial expressions using the iPhone X and recreates those expressions on a character model in Unity from the captured data. In addition, the author touches on some of the design choices he made to facilitate the pipeline.
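To make the idea of platform-independent captured data more concrete, here is a minimal sketch of one possible hand-off step between capture and playback. It is not the pipeline from the report. It assumes the capture is exported as per-frame ARKit blend shape coefficients (which ARKit reports in the range 0.0 to 1.0) and rescales them to the 0 to 100 range that Unity blend shape weights conventionally use. The JSON layout and the function names `to_unity_weights` and `convert_capture` are hypothetical and chosen only for illustration.

```python
import json

# ARKit reports each blend shape coefficient in the range 0.0-1.0;
# Unity blend shape weights conventionally run 0-100.
UNITY_WEIGHT_SCALE = 100.0


def to_unity_weights(frame):
    """Clamp one frame of coefficients to [0, 1] and scale to Unity's range."""
    return {
        name: max(0.0, min(1.0, float(value))) * UNITY_WEIGHT_SCALE
        for name, value in frame.items()
    }


def convert_capture(capture_json):
    """Convert a JSON list of frames shaped like
    {"time": ..., "shapes": {...}} (a hypothetical export format)."""
    frames = json.loads(capture_json)
    return [
        {"time": f["time"], "weights": to_unity_weights(f["shapes"])}
        for f in frames
    ]


if __name__ == "__main__":
    # One made-up frame; out-of-range values are clamped before scaling.
    sample = json.dumps([
        {"time": 0.0, "shapes": {"jawOpen": 0.25, "eyeBlinkLeft": 1.2}},
    ])
    print(convert_capture(sample))
```

Because the converted frames are plain data, the same capture could in principle drive a character in Unity, Blender, or any other tool that accepts named blend shape weights.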

Here is the link to the paper I wrote

And here is the demo video: